AI has officially entered the enterprise era, and Databricks is one of the catalysts behind that shift.

At this year’s Data + AI Summit in San Francisco, Databricks introduced a wave of new capabilities aimed at one critical goal: making AI truly production-ready. Whether it’s deploying modular agents, simplifying data workflows, or building next-gen intelligent applications — Databricks is assembling the infrastructure for AI systems that are scalable, safe, and efficient.

From Agent Bricks, LakeFlow Designer, and MLflow 3.0 to Lakebase and Trust3 AI, these announcements mark a turning point for enterprise teams seeking more control, trust, and velocity in their AI deployments.

Here’s a breakdown of what was revealed, and why it matters.

Agent Bricks: Modularizing the AI Deployment Lifecycle

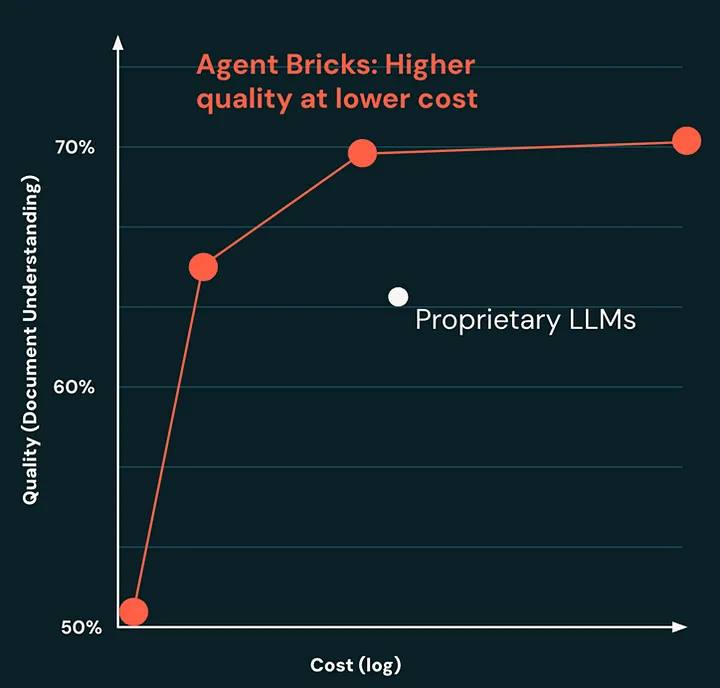

Agent Bricks, now in beta, builds on the Mosaic AI Agent Framework and introduces a new, modular approach to deploying intelligent agents. Users describe the task they want the agent to perform, connect the relevant data, and let the system handle the rest, from infrastructure setup to monitoring and governance.

The real innovation here is in how Agent Bricks handles the complexity of production environments. It offers built-in performance evaluation, cost optimization tools, and policy-based governance. That means teams can track correctness, latency, and outcomes, while also enforcing safety constraints and maintaining transparency around agent behavior.
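To make "tracking correctness and latency" concrete, here is a minimal sketch of an evaluation loop in plain Python. It does not use any Agent Bricks or Databricks API; `evaluate_agent`, `EvalReport`, and `toy_ticket_router` are hypothetical names invented for illustration, and the keyword-based router simply stands in for a deployed agent.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class EvalReport:
    accuracy: float        # fraction of answers matching the expected label
    mean_latency_s: float  # average wall-clock seconds per call
    max_latency_s: float   # slowest single call


def evaluate_agent(agent: Callable[[str], str],
                   cases: List[Tuple[str, str]]) -> EvalReport:
    """Run each (input, expected) case through the agent and score it."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    correct = 0
    latencies = []
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = agent(prompt)
        latencies.append(time.perf_counter() - start)
        # Case-insensitive exact match; real evaluations are usually richer.
        if answer.strip().lower() == expected.strip().lower():
            correct += 1
    return EvalReport(
        accuracy=correct / len(cases),
        mean_latency_s=sum(latencies) / len(latencies),
        max_latency_s=max(latencies),
    )


def toy_ticket_router(text: str) -> str:
    """Hypothetical stand-in for a deployed agent: keyword-based routing."""
    lowered = text.lower()
    if "refund" in lowered or "charge" in lowered:
        return "billing"
    if "password" in lowered or "login" in lowered:
        return "account"
    return "general"


CASES = [
    ("I was charged twice, please refund me", "billing"),
    ("I forgot my password", "account"),
    ("Where is your office?", "general"),
    ("My invoice looks wrong", "billing"),  # the toy router misses this one
]

report = evaluate_agent(toy_ticket_router, CASES)
print(f"accuracy={report.accuracy:.2f} "
      f"mean_latency={report.mean_latency_s:.6f}s")
```

The toy router gets three of the four cases right, so the report surfaces exactly the kind of gap a team would want to see before shipping. A managed platform would presumably run a far more sophisticated version of this loop, but the inputs (labelled cases) and outputs (quality and latency metrics) have the same shape.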

For teams tasked with building task-specific agents (think customer service automation, document review, or compliance workflows), this matters: Agent Bricks removes much of the friction that has historically slowed AI adoption in real-world systems.

LakeFlow Designer: No-Code ETL Comes to the Lakehouse

Databricks also introduced LakeFlow Designer, a visual interface for building and scheduling ETL workflows without writing code. This enables analysts, operations teams, and business users to design their own data pipelines by simply dragging and dropping components.

It’s a meaningful shift from the engineering-heavy model Databricks was originally built for. LakeFlow Designer allows cross-functional teams to combine structured and unstructured data sources, define flows, and manage them independently — without waiting on developers or creating bottlenecks.

As organizations decentralize data access and move toward domain-driven data products, giving more users the ability to shape data pipelines safely is an important step forward.

MLflow 3.0: Designed for Generative AI

The release of MLflow 3.0 brings long-awaited features built specifically for large language models and generative AI use cases. New capabilities include prompt versioning, which makes it easy to track and iterate on prompt engineering workflows, and hierarchical observability for understanding the inner workings of complex agent flows.

In addition, deeper integration with Unity Catalog and Databricks Workflows allows teams to align model evaluation and deployment with governance policies and production pipelines.

As AI systems become more dynamic and layered, managing them responsibly requires infrastructure that can surface meaningful signals across the stack. MLflow 3.0 delivers that level of visibility and structure — crucial for lifecycle management and auditability in enterprise environments.
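The idea behind prompt versioning can be sketched in a few lines of plain Python. This is not the MLflow API; `PromptRegistry` and `PromptVersion` are hypothetical classes written only to show the concept: every change to a prompt creates a new immutable version, and callers can either follow the latest version or pin a specific one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int          # 1-based, assigned by the registry
    template: str         # uses str.format placeholders such as {text}
    commit_message: str

    def render(self, **variables: str) -> str:
        """Fill the template's placeholders with the given values."""
        return self.template.format(**variables)


@dataclass
class PromptRegistry:
    _versions: Dict[str, List[PromptVersion]] = field(default_factory=dict)

    def register(self, name: str, template: str,
                 commit_message: str = "") -> PromptVersion:
        """Append a new version; earlier versions are never overwritten."""
        history = self._versions.setdefault(name, [])
        prompt = PromptVersion(name, len(history) + 1, template, commit_message)
        history.append(prompt)
        return prompt

    def load(self, name: str, version: Optional[int] = None) -> PromptVersion:
        """Return the pinned version, or the latest one if none is given."""
        history = self._versions[name]
        if version is None:
            return history[-1]
        if not 1 <= version <= len(history):
            raise KeyError(f"{name!r} has no version {version}")
        return history[version - 1]


registry = PromptRegistry()
registry.register("summarize", "Summarize this text: {text}", "initial draft")
registry.register("summarize",
                  "Summarize this text in {n} bullet points: {text}",
                  "add length control")

latest = registry.load("summarize")             # follows the newest version
pinned = registry.load("summarize", version=1)  # stays on the original
print(latest.version, "|", pinned.render(text="quarterly report"))
```

Pinning is what makes iteration safe: a production pipeline can stay on version 1 while an experiment evaluates version 2, and the commit messages leave an audit trail of why each change was made.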

Lakebase: Operational Databases Meet the Lakehouse

One of the more foundational announcements was Lakebase, a fully managed, Postgres-compatible engine designed to support transactional and operational workloads directly on the lakehouse. Built on technology from Databricks’ recent acquisition of Neon, it enables a new class of intelligent applications to run closer to the data.

By unifying analytical and operational workloads in a single governed platform, Lakebase eliminates the boundaries that have historically separated transactional systems from analytical pipelines. The result is a foundation purpose-built for real-time AI applications.

Trust3 AI: The Governance Layer for Scalable AI

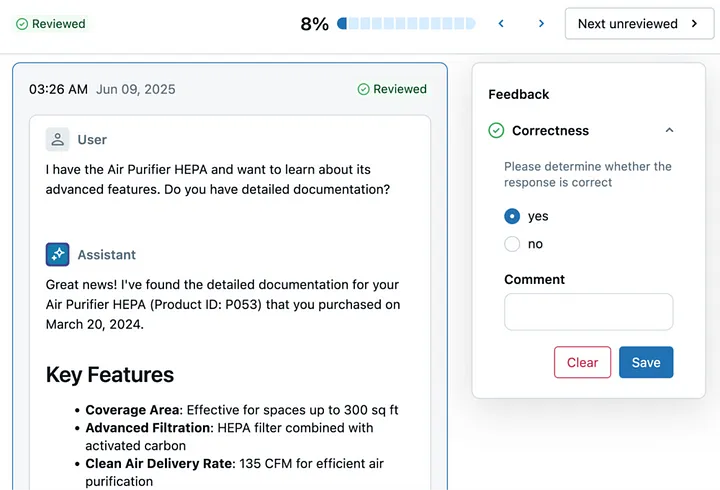

As Databricks lays the groundwork for production-grade AI, Trust3 AI provides the essential trust and governance layer needed to scale these systems responsibly. By unifying observability, runtime validation, and cross-platform accuracy, Trust3 AI ensures that organizations can deploy advanced AI agents and applications with confidence.

The combination of Databricks’ new capabilities and Trust3 AI’s governance framework creates a powerful foundation for enterprise AI — one that balances rapid innovation with the safety, compliance, and accountability that production environments demand.

Looking Ahead

The announcements at Data + AI Summit 2025 represent a significant step forward in the maturation of enterprise AI. By focusing on production readiness, modular deployment, and governance, Databricks and its partners are removing the barriers that have historically prevented AI from achieving its full potential in business contexts.

For organizations ready to move beyond AI experimentation and into production-scale deployments, the path is clearer than ever. With the right infrastructure and governance in place, the era of truly scalable, trustworthy enterprise AI has officially begun.