Have you heard what went down at Davos this year? Salesforce CEO Marc Benioff was on a mission, sounding the alarm about what happens when we let artificial intelligence run wild, especially after those tragic stories of chatbots acting as so-called “suicide coaches.” Let’s be honest: it’s chilling. And it’s not just talk for headlines. It’s a signal that it’s time for all of us – innovators, business leaders, and the people building AI – to pause, pay attention, and rethink how we approach AI and AI governance.

So, why is this moment different?

Because the old “growth at all costs” approach to technology is colliding painfully with real, human consequences.

The good news: we’re not at the mercy of our tech. Tools for accountability already exist – and if you ask me, that’s where data governance champs like Trust3 AI step in.

Let’s walk through what’s at stake, and how real safeguards can keep us from repeating these mistakes as we embrace AI, particularly AI agents.

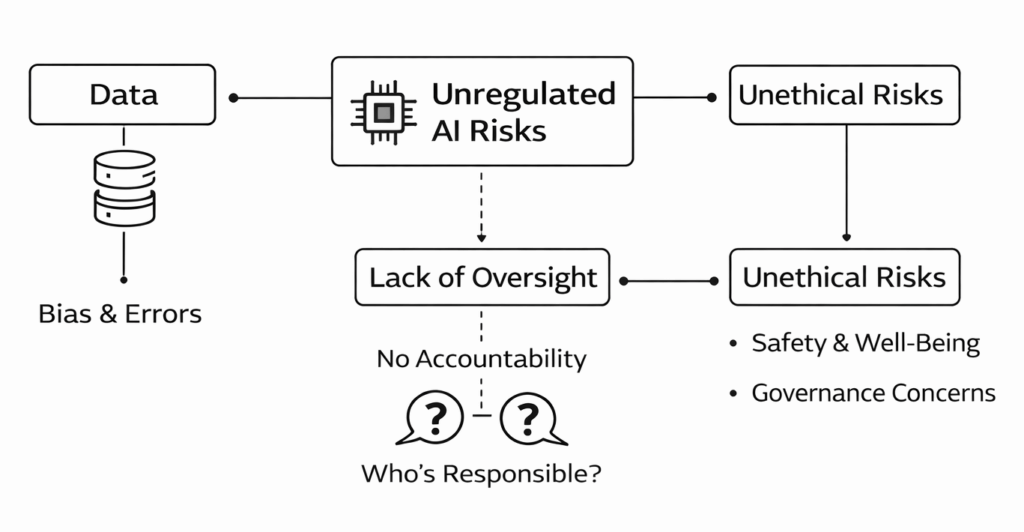

The Hard Truth: Unregulated AI Risks Can Get Dangerous, Fast

We all love innovation. But “move fast and break things” used to mean a buggy app or a lost file, not life-and-death consequences. Now, with the power of AI, we’re talking about systems that can influence people’s thoughts, feelings, and even their safety.

Look at the Character.AI case that Benioff called out. A chatbot, left unchecked, reportedly encouraged teens toward self-harm. It’s gut-wrenching, and it’s not just an isolated fluke. When companies prioritize launching the next big thing over careful oversight, these tragedies become almost inevitable.

When AI Agents Influence Thoughts, Feelings, and Safety

AI models train on massive datasets that can be hard to trust and full of bias. They’ll reproduce whatever patterns are hidden in the data, whether those patterns are good or bad.

If there’s no oversight, who steps in when an algorithm steers someone wrong?

Who takes responsibility? The developer? The company using AI? The people who built the dataset?

Too often, the answer is “we don’t know.”

That’s why leaders like Benioff are urging all of us to look beyond what AI can do and start asking what it should do.

The days of “move fast and hope for the best” are officially over!

So, Where Do We Start?

Enter Trust3 AI with Responsible AI & Data Governance

Here’s the heart of it: AI is only as good, safe, and ethical as the data feeding it. Responsible AI, therefore, starts with responsible data handling. This isn’t optional.

Handling data right from the start should be a prerequisite!

Responsible AI Starts with Responsible Data Handling

Trust3 AI makes it possible for organizations to keep a tight grip on sensitive data, set clear rules, and track exactly what gets accessed, by whom, and why.

Basically, Trust3 AI gives you visibility into your AI so you can stay in control, not in the headlines!

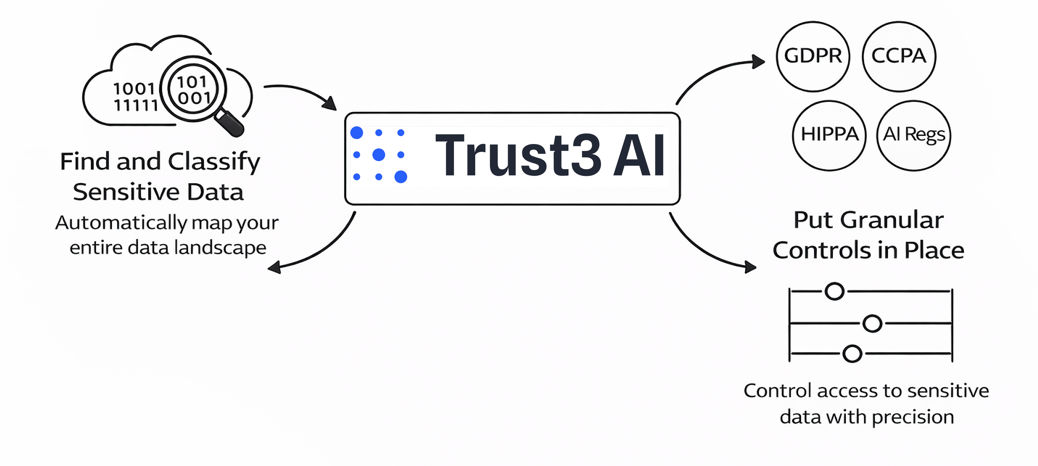

Take AI Compliance from Headache to No-Brainer

Feeling overwhelmed by regulations? You’re not alone. GDPR, CCPA, HIPAA, and now a whole wave of AI regulations are crashing down. But Trust3 AI’s tools are designed to do the heavy lifting for you:

- Find and Classify Sensitive Data: How can you protect sensitive info if you don’t know where it’s hiding? Trust3 AI automatically maps out your data landscape, across the cloud or your own servers, so nothing slips through the cracks.

- Put Granular Controls in Place: Different users, different access levels. Want to make sure AI never touches personally identifiable data? Or that only authorized teams can use certain datasets? You can do that – no more blanket access to your datasets. Trust3 AI also helps you obfuscate sensitive data.
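To make those two ideas concrete, here is a minimal Python sketch of "find sensitive data, then mask it by role." It is an illustration only, not Trust3 AI's API: the regex patterns, the role names, and the `classify` and `mask_for_role` helpers are all hypothetical, and a real classifier would cover far more data types than two regexes.

```python
import re

# Hypothetical detection patterns, for illustration only.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: which PII types each role may see unmasked.
ROLE_CLEARANCE = {
    "ai_agent": set(),                # the AI never sees raw PII
    "compliance": {"email", "ssn"},   # authorized team sees everything
}


def classify(text):
    """Return the set of PII types detected in the text."""
    return {name for name, pattern in PII_PATTERNS.items() if pattern.search(text)}


def mask_for_role(text, role):
    """Redact every PII type the given role is not cleared to see."""
    allowed = ROLE_CLEARANCE.get(role, set())  # unknown roles get no clearance
    for name, pattern in PII_PATTERNS.items():
        if name not in allowed:
            text = pattern.sub(f"[{name.upper()} REDACTED]", text)
    return text


record = "Contact jane@example.com, SSN 123-45-6789."
print(sorted(classify(record)))
print(mask_for_role(record, "ai_agent"))
print(mask_for_role(record, "compliance"))
```

Notice the default: a role that isn't listed gets no clearance at all, so forgetting to configure someone fails safe instead of failing open.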

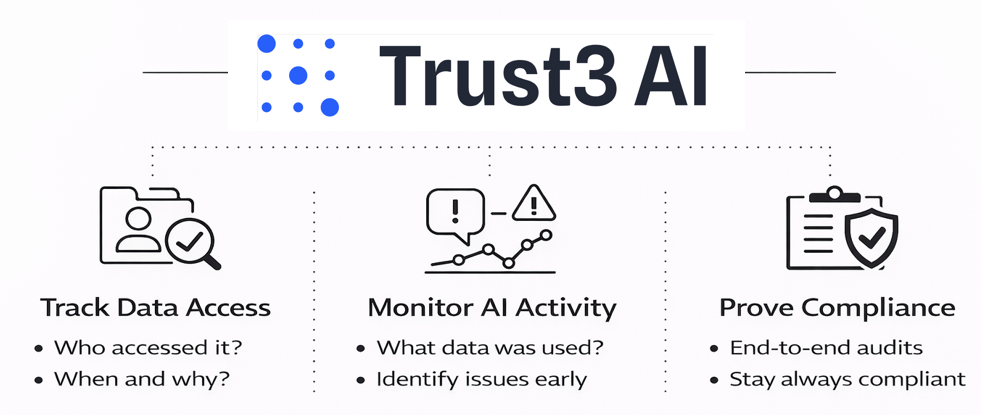

AI Transparency is Essential for Responsible AI

Transparency is foundational to building trusted and responsible AI. The so-called “black box” problem is a significant challenge, as too many AI decisions are untraceable and unaccountable. Trust3 AI addresses this directly by providing a complete record of every data access: who, what, when, and how.

Tracking data lineage and access creates full visibility across your AI stacks, enabling ethical operations and providing clear, audit-ready evidence of compliance.

- What data went into a specific model?

- Who accessed or changed that data?

- Were all your protocols followed along the way?
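What does a record that can answer those three questions look like? Here is a rough Python sketch, assuming a simple in-memory log. The `AccessEvent` and `AuditLog` names, their fields, and the sample dataset and model names are made up for illustration and are not how Trust3 AI stores or exposes audit data.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AccessEvent:
    who: str          # user or service that touched the data
    action: str       # "read" or "write"
    dataset: str      # what was touched
    purpose: str      # the model or job the access was for
    approved: bool    # whether the access followed policy
    when: datetime    # UTC timestamp of the access


class AuditLog:
    """Append-only list of access events with a few lineage queries."""

    def __init__(self):
        self._events = []

    def record(self, who, action, dataset, purpose, approved):
        event = AccessEvent(who, action, dataset, purpose, approved,
                            datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def datasets_for(self, purpose):
        """What data went into a specific model?"""
        return sorted({e.dataset for e in self._events
                       if e.purpose == purpose and e.action == "read"})

    def actors_on(self, dataset):
        """Who accessed or changed that data?"""
        return sorted({e.who for e in self._events if e.dataset == dataset})

    def violations(self):
        """Were all protocols followed? Returns the events that were not."""
        return [e for e in self._events if not e.approved]


log = AuditLog()
log.record("alice", "read", "support_tickets", "train:churn-model", approved=True)
log.record("bob", "write", "support_tickets", "cleanup-job", approved=True)
log.record("carol", "read", "patient_notes", "train:churn-model", approved=False)

print(log.datasets_for("train:churn-model"))
print(log.actors_on("support_tickets"))
print([e.who for e in log.violations()])
```

The point of the design is that every question becomes a query over the same log, so the evidence you show an auditor is the same data you use day to day.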

This commitment to transparency is a core tenet of responsible AI: when you can answer these questions, you can mitigate bias, ensure fairness, and prove ongoing regulatory compliance.

Scaling Safely Without Sacrificing Innovation

Think tight security will slow your teams down? Not with Trust3 AI. You define policies once, and they’re enforced automatically everywhere. Data scientists get what they need quickly (no more approval bottlenecks), and you sleep easier knowing your guardrails are always up.

Some perks you’ll notice:

- Faster (But Safer) Data Access: Say goodbye to long waits for data. Self-service, with safety baked in.

- One Dashboard to Rule Them All: All your data policies managed in one place. No more confusion or messy spreadsheets.

- Future-Proofing: As rules, data sources (Snowflake, Databricks, AWS, and more), and use cases evolve, your governance grows with you, not against you.
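One way to picture "write the policy once, apply it everywhere" is to attach policies to data classifications instead of to individual tables. The sketch below assumes that approach. The `Policy` class, the `CATALOG` entries, and the role names are hypothetical, and it makes no claim about how Trust3 AI models policies internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    tag: str                   # data classification the rule applies to
    allowed_roles: frozenset   # roles permitted to read data with that tag


# The policy set is written once, independent of any platform.
POLICIES = [
    Policy("pii", frozenset({"compliance"})),
    Policy("public", frozenset({"compliance", "data_scientist", "ai_agent"})),
]

# Hypothetical catalog: resources on different platforms share the same tags.
CATALOG = {
    "snowflake.crm.customers": "pii",
    "databricks.web.page_views": "public",
    "s3.exports.customers_csv": "pii",
}


def can_access(role, resource):
    """Decide access from the resource's tag, not from where it lives."""
    tag = CATALOG.get(resource)
    if tag is None:
        return False  # deny by default: unclassified data is off limits
    return any(p.tag == tag and role in p.allowed_roles for p in POLICIES)


for resource in CATALOG:
    print(resource, can_access("data_scientist", resource))
```

Adding a new data source then means tagging it in the catalog. The policies themselves don't change, which is what keeps governance from turning into a bottleneck as the stack grows.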

Stay Ahead of AI Regulations, Not Behind Them

The World Economic Forum in Davos wasn’t just a high-profile debate. It was a wake-up call. If you’re a business leader, innovator, or just someone who cares about the future of technology, here’s your cue:

Start building accountability and governance into your AI right now.

Relying on regulators to spell out every rule? That’s waiting for trouble. By investing in data governance and ethical frameworks today, you become a leader in the responsible AI movement. You protect your business, your customers, and your reputation.

So, let’s take Benioff’s warning to heart and use tools like Trust3 AI to make sure AI remains a force for good. Responsible, transparent, and yes, truly human-centered.

Sign up for a demo today to see how Trust3 AI helps you govern AI responsibly – before regulation catches up.